Why We Priced Radar Per Cluster, Not Per Seat

Per-seat pricing punishes a visibility tool's whole point: more eyes. The five pricing models we compared, why per-cluster won, and where it's a worse deal.

A platform lead we talked to last month runs a fleet of nine clusters with a team of twelve. Her incumbent "Kubernetes management" vendor quoted her $1,500 per seat per year. Twelve seats. $18,000. But that number only covers the twelve people on her team today. The on-call engineer from the partner team who gets paged once a quarter? Another seat. The security reviewer who wants to audit the cluster once a year? Another seat. The CFO who wanted to see what they were paying for? You can imagine.

She did the thing every sensible engineering leader does: bought six seats, shared logins with the rest of the team, and disabled audit logs so it wouldn't show up in reviews.

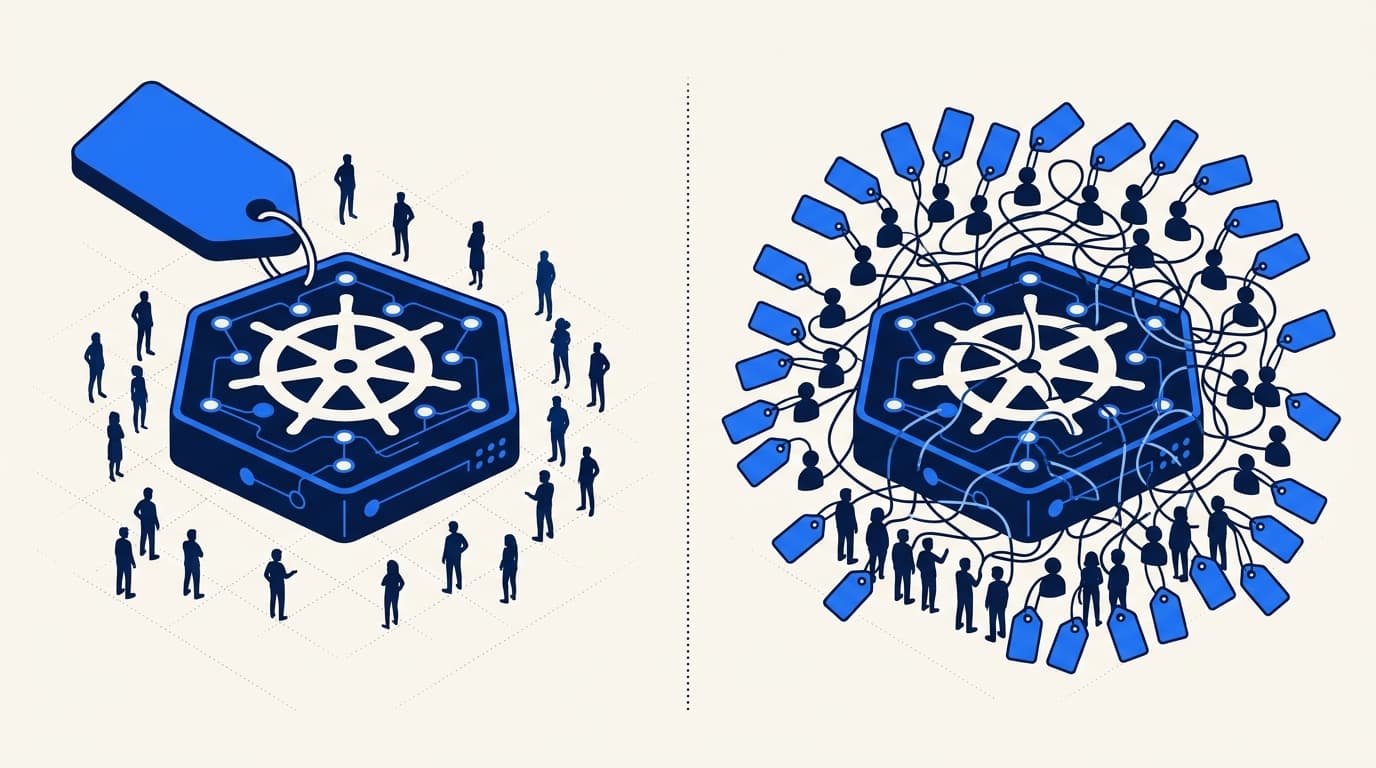

That's the outcome per-seat pricing creates. Not "more revenue per customer." Shared logins, broken audit trails, and a vendor that's quietly hostile to the job it's sold to do.

When we priced Radar Cloud, we ruled out per-seat on day one. Here's the rest of the thinking.

TL;DR

Radar Cloud is priced per cluster, not per seat. Free for up to three clusters. $99 per cluster per month on Team. Custom on Enterprise.

A visibility tool gets more valuable the more people look at it, so pricing that punishes adoption is pricing at war with the product. Per-cluster aligns with our actual costs (we run one tunnel and one cluster's worth of registry / audit / billing state per connected cluster) and with the thing you care about (how much infrastructure you're monitoring).

It's not the right model for every customer - we'll be honest about that below.

The five models we compared

Every pricing decision is a choice between bad options. We sketched five on a whiteboard.

| Model | Scales with | Predictable? | Fair at growth? | Hostile to adoption? |
|---|---|---|---|---|
| Per seat | Team size | Yes | No | Yes |
| Per cluster | Fleet size | Yes | Mostly | No |
| Per node | Node count | Sometimes | No | No |
| Per event / per GB | Cluster chaos | No | No | No |
| Flat tiers by feature | Whatever we pick | Yes | Depends | Neutral |

Each has a real argument for it. Here's why we landed where we did.

Why not per seat

The pitch for per-seat is simple: it's how most SaaS is priced, buyers understand it, and your revenue scales with the customer's team. The problem is that it scales against what Radar is actually for.

A visibility tool is worth more when more people can look at it. The on-call engineer who didn't deploy the thing that broke at 3am needs the dashboard more than the engineer who did. The security reviewer running a quarterly check. The manager who wants to see cluster health before a steering committee. The new hire trying to build a mental model of prod.

Per-seat pricing turns every one of those people into a line item someone has to justify. The outcomes we saw in the wild:

- Shared logins. We've interviewed teams who share a `devops@company.com` credential across ten people because the seat math doesn't work otherwise. Audit logs turn into "devops@ did it."
- Procurement friction for new hires. Onboarding a new engineer should be a `helm install` and a Slack invite. Not a purchase order.
- The "we forgot about bob" bill. Someone leaves, nobody remembers to deprovision, and the renewal has line items for people who haven't logged in for a year.

The worst part: per-seat incentivizes us to make the tool harder to use without an account. The opposite of the thing we want.

Why not per node

Per-node sounds like it tracks infrastructure scale. It doesn't.

We ran the numbers on a hundred real clusters from our beta cohort. The median (p50) cluster had 9 nodes, the 90th-percentile cluster had 47, and the 99th had 340. Under per-node pricing, a customer with one beefy 200-node cluster running a correctly tuned HPA would pay the same as a customer running ten small-and-wasteful 20-node clusters. That's backwards. The HPA team is doing the right thing.

Our cost of running Radar Cloud doesn't scale linearly with node count either. Radar watches the Kubernetes API server, not the nodes. Watching a 50-node cluster and a 500-node cluster costs us roughly the same in tunnel bandwidth and control-plane state - the object count grows sublinearly with nodes for most workloads. Per-node would punish customers for infrastructure they don't care about.
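To make the 200-node comparison above concrete, here is a minimal sketch. The $5 per-node rate is a hypothetical number chosen only for illustration (nobody quoted it), and the function names are ours. The $99 per-cluster figure is the Team price from this post.

```python
# Hypothetical per-node rate for illustration only; not a quoted price.
PER_NODE_MONTHLY = 5
# Team price per cluster per month, as stated in this post.
PER_CLUSTER_MONTHLY = 99


def per_node_bill(node_counts):
    """Monthly bill when every node is billed, regardless of cluster layout."""
    return PER_NODE_MONTHLY * sum(node_counts)


def per_cluster_bill(node_counts):
    """Monthly bill when every cluster is billed, regardless of its size."""
    return PER_CLUSTER_MONTHLY * len(node_counts)


one_big = [200]        # one 200-node cluster
ten_small = [20] * 10  # ten 20-node clusters

print(per_node_bill(one_big), per_node_bill(ten_small))        # 1000 1000
print(per_cluster_bill(one_big), per_cluster_bill(ten_small))  # 99 990
```

Per-node billing cannot tell the two fleets apart. Per-cluster billing charges the ten-cluster fleet ten times as much, which tracks the ten tunnels we would actually be running.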

Why not per event or per GB

This is the Datadog model and it's the one that produces the worst end-of-quarter conversations in our industry.

The whole point of Radar is to surface the events you didn't know about. If those events cost you money, you're incentivized to drop your retention, mute your alerts, and generally let less signal through. We want exactly the opposite behavior. A pod in CrashLoopBackOff should get more eyes on it, not fewer, and the billing department shouldn't be the reason your incident got harder to debug.

Per-event pricing also makes bills impossible to predict. A bad deploy that generates 40,000 events in an hour is already a bad day. It shouldn't also be an invoice shock next month.

Why not flat feature tiers

"Radar Starter, Radar Pro, Radar Enterprise, $x / $y / $z" is the path of least resistance. We have tiers - Free, Team, Enterprise - but the axis we price on inside those tiers is still per-cluster.

Flat-fee tiering has two failure modes. The first is that a two-cluster customer and a forty-cluster customer pay the same, which is absurd on both ends: the small customer overpays for capacity they don't use, the large customer gets a windfall we can't afford. The second is that sales conversations devolve into negotiating what's in each tier. We'd rather the conversation be "how many clusters?" than "can we add SAML to Team for $50 extra?"

Why per cluster won

Three reasons, in decreasing order of importance.

It aligns with our costs. For every cluster connected to Radar Cloud, we hold open one long-lived WebSocket tunnel, route browser requests through it, and store one cluster's worth of registry, audit, and billing state in our control plane. When a customer adds a cluster, our cost goes up roughly linearly. When they add an engineer, our cost goes up by zero. Pricing that matches cost isn't greedy - it's how we avoid a subsidy war between small customers and large ones.

It aligns with customer value. Ask a platform lead "what does Radar save you?" and the answer is usually something like "we catch bad deploys in staging before they hit prod." That value doesn't scale with team size. It scales with how much infrastructure is under observation. One cluster = one production surface where something can break. That's the thing we should charge for.

It rewards broad adoption. A $99 monthly bill that lets your entire team (and the on-call rotation, and the security reviewer, and the skeptical manager) into the dashboard produces dramatically better outcomes than a $99 bill that gets shared across two seats. The tool works when more people look at it. Our pricing should pay for that, not tax it.
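As a rough worked example, here is the arithmetic for the platform lead from the opening: nine clusters, twelve engineers, plus three occasional viewers (the on-call engineer, the security reviewer, the CFO). This is a sketch, not our billing logic. It assumes a fleet of up to three clusters pays nothing and a larger fleet pays $99 per month for every connected cluster. The function names are ours.

```python
SEAT_PRICE_PER_YEAR = 1_500    # incumbent vendor's quote from the opening example
CLUSTER_PRICE_PER_MONTH = 99   # Radar Cloud Team price per cluster
FREE_CLUSTER_LIMIT = 3         # Free covers fleets of up to three clusters


def per_seat_annual(seats):
    """Annual cost when every person with a login is billed."""
    return seats * SEAT_PRICE_PER_YEAR


def per_cluster_annual(clusters):
    """Annual cost when every connected cluster is billed.

    Assumption: fleets within the free limit pay nothing, and larger
    fleets pay the Team price for all of their clusters.
    """
    if clusters <= FREE_CLUSTER_LIMIT:
        return 0
    return clusters * CLUSTER_PRICE_PER_MONTH * 12


core_team = 12
occasional_viewers = 3  # on-call engineer, security reviewer, CFO

print(per_seat_annual(core_team))                       # 18000
print(per_seat_annual(core_team + occasional_viewers))  # 22500
print(per_cluster_annual(9))                            # 10692
```

The per-seat total rises with each additional viewer. The per-cluster total stays at the same figure no matter how many people log in.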

Where per-cluster is a worse deal

We'd rather tell you this than have you discover it.

If you run one giant cluster. A single 400-node cluster with 30 tenants feels under-priced at $99/month on Team, because per-cluster pricing doesn't care how big the cluster is. That really is a great deal for the customer, and it's fine by us, because our cost is still the per-cluster cost.

If you run many tiny dev clusters. The flip side. A team with ten short-lived kind clusters for CI runners pays as much as a team with ten prod clusters. We're watching this and considering a "dev cluster" discount if the usage data says to, but we haven't landed on clean criteria that couldn't be gamed. For now: if you have a fleet of ephemeral CI clusters, pin them through one long-lived Radar-connected cluster or talk to us.

If you need SCIM but have one cluster. SCIM 2.0 provisioning ships on Enterprise, which is custom-priced. For a single-cluster team that happens to need SCIM for IT-automation reasons (SAML / OIDC SSO itself is on every plan at published pricing), that's a painful jump from Team. We know. If this is you, talk to us - we've been doing one-off custom plans on a case-by-case basis while we figure out whether it's the tier or the price that needs to flex.

What will change the pricing

We're not precious about any of this.

We'll revisit per-cluster if the data shows it misallocates cost - if one customer's cluster is consistently ten times more expensive to run than the average, or if the "dev cluster" complaint turns into a real pattern instead of an edge case. We'll revisit the tier prices if our infrastructure costs shift materially. We'll grandfather existing customers when we do. We'll announce changes in the blog, not in an email nobody reads.

One thing we won't change: visibility tools get better when more people look at them. That's the whole product. It's also why per-seat was off the table before we had a pricing meeting.

If you're evaluating Radar Cloud and the pricing math feels off for your specific fleet shape, tell us. We'd rather know.
