Kubernetes deletes events after an hour. Radar doesn't.

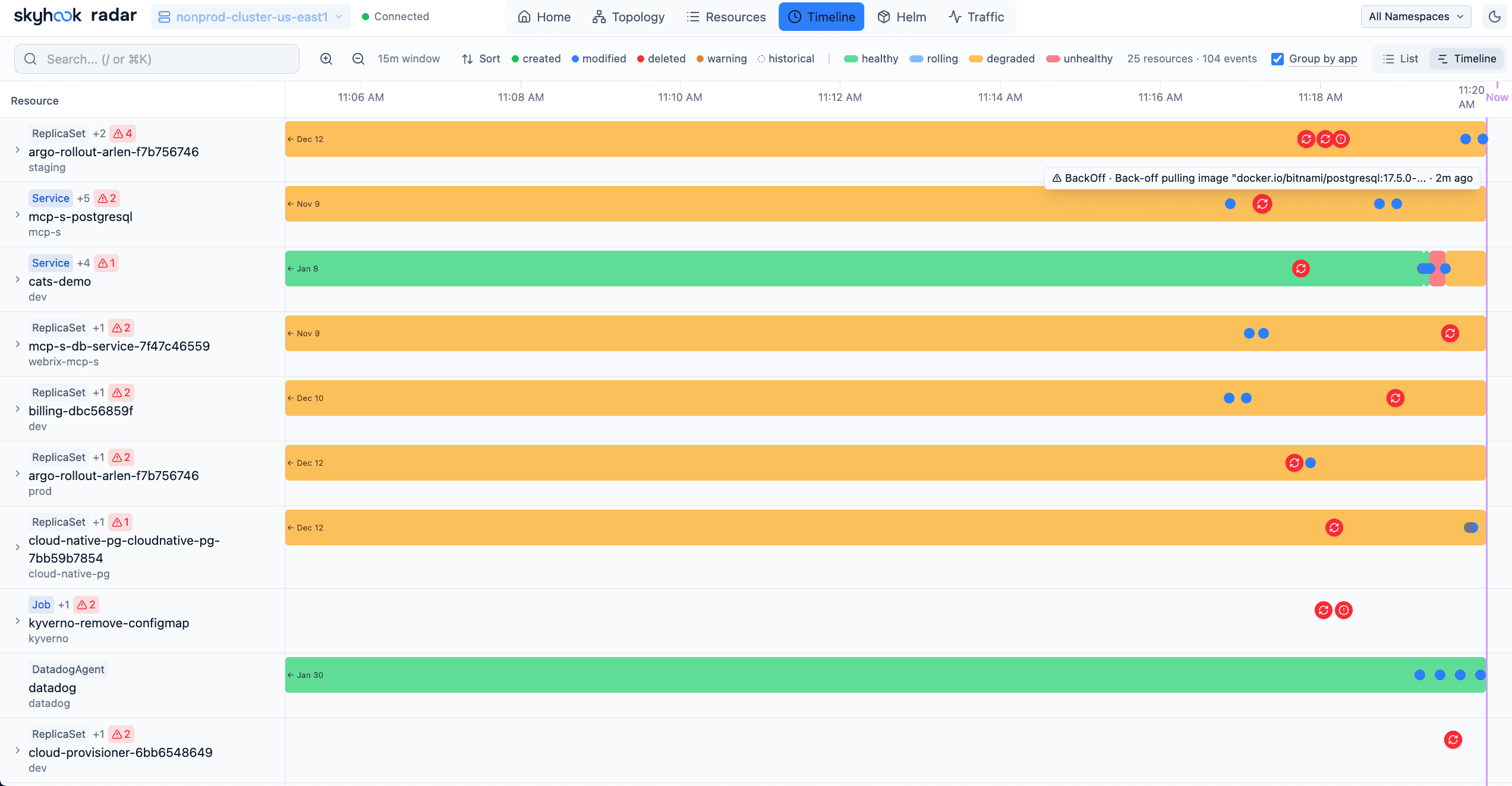

Every event, every resource delta, captured as it fires via Kubernetes Watch - held in memory (or SQLite) so the story is still there when the pager goes off hours later.

The 1-hour TTL and the 2am page.

The kube-apiserver flag that controls event retention is `--event-ttl`, and the default is one hour. After that, events are garbage-collected from etcd.

On managed clusters (GKE, EKS, AKS), you often can't change it. On self-managed clusters you can crank it up, but etcd usage grows roughly linearly with the TTL, and a bloated etcd eventually hurts control-plane latency. The whole point of the default is this: don't use your API server as an audit log.
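As a back-of-envelope sketch of that linear growth: at steady state, the number of retained events is roughly the arrival rate times the TTL. The event rate and per-event size below are illustrative assumptions, not measurements from any cluster, and the model treats every event as its own stored object.

```python
def retained_events(events_per_second: float, ttl_hours: float) -> int:
    """Steady-state number of Event objects held before the TTL expires them."""
    return round(events_per_second * ttl_hours * 3600)


def retained_bytes(events_per_second: float, ttl_hours: float, avg_event_bytes: int) -> int:
    """Approximate storage those events occupy, ignoring etcd overhead."""
    return retained_events(events_per_second, ttl_hours) * avg_event_bytes


# Hypothetical cluster: 5 events/s, about 1 KiB per stored event.
for ttl in (1, 24, 168):
    mib = retained_bytes(5, ttl, 1024) / 2**20
    print(f"ttl={ttl:>3}h  events={retained_events(5, ttl):>9,}  ~{mib:,.0f} MiB")
```

Going from a one-hour TTL to a one-week TTL multiplies the retained set by 168 under these assumptions, which is the cost the default is designed to avoid.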

Which means: the pod crashed at 2pm, the pager fires at midnight, and the events that would tell you why are already gone. You're debugging from the current state only, guessing backwards from a cluster that has since quieted down.

Radar captures events the instant they fire, via Watch. Whatever the API server deletes in an hour, Radar has already seen and kept.

Six things the timeline does well.

Every event, deduplicated

Kubernetes emits events in bursts - one FailedScheduling event can fire thirty times in ten seconds. Radar collapses repeats by resource + reason + message and shows a count, so the timeline is readable at a glance.
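A minimal sketch of that collapsing step, assuming events arrive as dicts with an `involvedObject`, a `reason`, a `message`, and a `timestamp`. The `TimelineEntry` type and `collapse` function are hypothetical names for illustration, not Radar's internals.

```python
from dataclasses import dataclass


@dataclass
class TimelineEntry:
    kind: str
    namespace: str
    name: str
    reason: str
    message: str
    first_seen: str
    last_seen: str
    count: int = 1


def collapse(events: list[dict]) -> list[TimelineEntry]:
    """Merge events that share resource + reason + message, keeping a count."""
    entries: dict[tuple, TimelineEntry] = {}
    for ev in events:
        obj = ev["involvedObject"]
        key = (obj["kind"], obj["namespace"], obj["name"], ev["reason"], ev["message"])
        seen = entries.get(key)
        if seen is None:
            entries[key] = TimelineEntry(*key, first_seen=ev["timestamp"], last_seen=ev["timestamp"])
        else:
            seen.count += 1
            # ISO-8601 UTC strings in one format sort chronologically.
            seen.first_seen = min(seen.first_seen, ev["timestamp"])
            seen.last_seen = max(seen.last_seen, ev["timestamp"])
    return list(entries.values())


# Thirty identical FailedScheduling events within one minute.
pod = {"kind": "Pod", "namespace": "api", "name": "api-7d9f-k2pxq"}
burst = [
    {"involvedObject": pod, "reason": "FailedScheduling",
     "message": "0/3 nodes are available", "timestamp": f"2026-03-10T14:01:{s:02d}Z"}
    for s in range(30)
]
timeline = collapse(burst)
print(len(timeline), timeline[0].count)
```

The burst becomes one entry with a count and a first/last timestamp pair, which is the shape a readable timeline row needs.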

Resource deltas with diffs

When a Deployment's replica count jumps from 3 to 10 at 2:02pm, the timeline shows the exact delta. Container image bumps, annotation changes, configmap edits - all captured as structured changes, not free-text log lines.
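One way to picture a structured change is a field-level diff between two snapshots of the same resource. The sketch below is an assumption about the general technique, not Radar's diff format: it walks nested dicts, treats lists as opaque values, and joins keys with dots (so keys that themselves contain dots would need escaping in a real implementation).

```python
def diff(old: dict, new: dict, path: str = "") -> list[dict]:
    """Return field-level changes between two nested dicts."""
    changes = []
    for key in sorted(old.keys() | new.keys()):
        here = f"{path}.{key}" if path else key
        if key not in old:
            changes.append({"path": here, "op": "added", "to": new[key]})
        elif key not in new:
            changes.append({"path": here, "op": "removed", "from": old[key]})
        elif isinstance(old[key], dict) and isinstance(new[key], dict):
            # Recurse so only the leaf that moved is reported.
            changes.extend(diff(old[key], new[key], here))
        elif old[key] != new[key]:
            changes.append({"path": here, "op": "changed", "from": old[key], "to": new[key]})
    return changes


# Hypothetical before/after snapshots of a Deployment.
before = {"spec": {"replicas": 3, "image": "api:1.4.0"}, "metadata": {"annotations": {}}}
after = {"spec": {"replicas": 10, "image": "api:1.4.0"}, "metadata": {"annotations": {"owner": "team-api"}}}

for change in diff(before, after):
    print(change)
```

Unchanged fields such as `spec.image` produce no output, so the timeline entry carries only what moved.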

Scroll past the 1-hour TTL

kube-apiserver's `--event-ttl` defaults to one hour. After that, events are garbage-collected from etcd. Radar captures them via Watch the moment they fire, so the history survives regardless of what the API server has retained.

Memory by default, SQLite on demand

In-memory is fast and fits the local-debugging shape. Pass `--timeline-storage sqlite` and Radar persists to a local file, so the history survives restarts of the binary. Still local, still yours.

Filter hard, URL-preserved

By type (Normal / Warning), namespace, resource kind, reason (OOMKilled, FailedMount, Unhealthy), or free-text search. Filters live in the URL, so pasting a timeline URL in Slack opens the same filtered view on the next engineer's screen.
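Keeping filters in the URL amounts to a lossless round trip between a filter set and a query string. Here is a sketch of that round trip; the base URL and the parameter names (`type`, `namespace`, `reason`, `q`) are assumptions for illustration and may not match what Radar actually uses.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def to_url(base: str, filters: dict) -> str:
    """Serialize a filter set into a shareable URL with a stable key order."""
    # doseq=True expands list values into repeated query parameters.
    query = urlencode({k: v for k, v in sorted(filters.items()) if v}, doseq=True)
    return f"{base}?{query}" if query else base


def from_url(url: str) -> dict:
    """Recover the filter set from a pasted URL."""
    return parse_qs(urlsplit(url).query)


filters = {
    "type": ["Warning"],
    "namespace": ["api"],
    "reason": ["OOMKilled", "FailedMount"],
    "q": ["memory limit"],
}
url = to_url("http://localhost:8080/timeline", filters)  # hypothetical address
print(url)
```

Because `from_url(url)` returns the same filter set that produced the link, whoever opens it sees the same view without any server-side session.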

Live push via SSE

New events stream into the view as they happen. No polling, no refresh button - the timeline extends itself. Stable under light churn, handles the firehose during a bad rollout.
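For readers unfamiliar with Server-Sent Events, each pushed update is a small text frame on a long-lived HTTP response: optional `id:` and `event:` fields, one or more `data:` lines, then a blank line. The sketch below formats one such frame; the event name `timeline` and the payload shape are assumptions, not Radar's wire format.

```python
import json


def sse_frame(event: dict, event_id: int, name: str = "timeline") -> str:
    """Format one Server-Sent Events frame carrying a JSON payload."""
    # Compact json.dumps output never contains a raw newline,
    # so the payload fits on a single data: line.
    payload = json.dumps(event, separators=(",", ":"))
    return f"id: {event_id}\nevent: {name}\ndata: {payload}\n\n"


frame = sse_frame({"reason": "OOMKilled"}, 7)
print(frame, end="")
```

The `id:` field lets a browser that reconnects send `Last-Event-ID`, so a server can resume from where the stream dropped instead of replaying everything.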

Events with context, not just strings.

Every entry is structured: the involved object, the reason, the count, the first and last timestamps, the kubelet or controller that emitted it. Click into any entry and the resource detail opens with the state of the world at that moment.

When you scrub the timeline back two hours, the resource view reflects what things looked like then - replica counts, labels, annotations, status conditions. Time travel for your cluster.

    {
      "type": "Warning",
      "reason": "OOMKilled",
      "involvedObject": {
        "kind": "Pod",
        "name": "api-7d9f-k2pxq",
        "namespace": "api"
      },
      "message": "Container api exceeded memory limit (512Mi)",
      "firstTimestamp": "2026-03-10T14:01:47Z",
      "count": 1,
      "source": {
        "component": "kubelet",
        "host": "ip-10-0-4-212.ec2.internal"
      }
    }

Why the timeline holds up under a bad rollout.

Watch API, not polling

SharedInformers hold open Watch connections to the apiserver. When an event fires, the informer invokes a handler, and Radar records the event long before the TTL can expire it.
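The difference between being pushed an event and listing events later can be shown with a toy in-process model. This is not Kubernetes or client-go code: `ToyEventStore` is a hypothetical stand-in for apiserver storage with a TTL, and the handler list stands in for an informer's callbacks.

```python
from typing import Callable


class ToyEventStore:
    """Stand-in for event storage that expires entries after a TTL."""

    def __init__(self, ttl_seconds: int):
        self.ttl = ttl_seconds
        self.events = []    # (created_at, event) pairs
        self.handlers = []  # callbacks registered by watchers

    def watch(self, handler: Callable[[dict], None]) -> None:
        self.handlers.append(handler)

    def emit(self, now: int, event: dict) -> None:
        self.events.append((now, event))
        for handler in self.handlers:
            handler(event)  # pushed at the moment the event fires

    def list_events(self, now: int) -> list:
        """What a late reader sees: only events younger than the TTL."""
        return [ev for created, ev in self.events if now - created < self.ttl]


store = ToyEventStore(ttl_seconds=3600)
captured = []
store.watch(captured.append)  # the watcher keeps its own copy

store.emit(now=0, event={"reason": "OOMKilled"})

ten_hours_later = 10 * 3600
print(store.list_events(ten_hours_later))  # the store has expired it
print(captured)                            # the watcher still has it
```

A poller that only calls `list_events` after the TTL window gets nothing, while the watcher's copy is unaffected by expiry.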

Two storage modes

In-memory by default - fast, fits the local use case, gone on restart. `--timeline-storage sqlite` persists to a local file so history survives between sessions.
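To make the persistence trade-off concrete, here is a sketch of an append-only SQLite timeline using Python's standard library. The table layout, column names, and helper functions are assumptions for illustration and are not Radar's actual schema.

```python
import json
import os
import sqlite3
import tempfile


def open_timeline(path: str) -> sqlite3.Connection:
    """Open (or create) a timeline database at the given path."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS timeline (
               id INTEGER PRIMARY KEY,
               ts TEXT NOT NULL,
               namespace TEXT NOT NULL,
               reason TEXT NOT NULL,
               body TEXT NOT NULL
           )"""
    )
    conn.execute("CREATE INDEX IF NOT EXISTS timeline_ts ON timeline (ts)")
    return conn


def record(conn: sqlite3.Connection, event: dict) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO timeline (ts, namespace, reason, body) VALUES (?, ?, ?, ?)",
            (event["firstTimestamp"], event["involvedObject"]["namespace"],
             event["reason"], json.dumps(event)),
        )


def since(conn: sqlite3.Connection, ts: str) -> list:
    rows = conn.execute("SELECT body FROM timeline WHERE ts >= ? ORDER BY ts", (ts,))
    return [json.loads(body) for (body,) in rows]


event = {
    "reason": "OOMKilled",
    "firstTimestamp": "2026-03-10T14:01:47Z",
    "involvedObject": {"kind": "Pod", "name": "api-7d9f-k2pxq", "namespace": "api"},
}
path = os.path.join(tempfile.mkdtemp(), "timeline.db")

conn = open_timeline(path)
record(conn, event)
conn.close()

reopened = open_timeline(path)  # simulates restarting the process
restored = since(reopened, "2026-03-10T00:00:00Z")
reopened.close()
print(restored[0]["reason"])
```

The in-memory mode is the same idea without the file: faster and with nothing to clean up, but the list is empty after a restart.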

Diffs, not blobs

Resource changes land as structured deltas - what fields moved, from what to what. That's how Radar can show you the exact ConfigMap edit that preceded the OOMKill, not just “something changed.”

Apache 2.0. Yours to inspect, fork, or self-host.

Radar's source is on GitHub. Every feature on this page is in the binary you install with `brew install`. No telemetry, no mandatory login, no phone-home. If we ever change that, you'll see it in a diff first.

Radar Cloud shares the timeline with your whole team.

Radar runs in your cluster and persists the event timeline locally. Radar Cloud gives your whole team shared access via a single signed-in app - same per-cluster history, surfaced through one UI instead of one engineer's laptop.

See the OSS vs Cloud comparison

Four more things Radar does in the same binary.

Live topology graph

Every resource and connection, laid out by ELK.js, updated via SSE.

Image filesystem viewer

Browse any container image tree without kubectl exec or docker pull.

Cluster audit

31 best-practice checks across security, reliability, and efficiency.

AI via MCP

Give Claude, Cursor, or Copilot a safe, token-optimized view of your cluster.

Stop debugging incidents from memory.

The timeline is there. You just need Radar to show it to you.

Apache 2.0 OSS · Unlimited clusters self-hosted · Hosted free tier for up to 3 clusters